Many B2B marketing managers face the same frustration: inconsistent conversion results despite steady traffic. Without a systematic approach, optimization becomes guesswork rather than strategy. A structured, data-driven conversion rate optimization workflow transforms how you identify opportunities, test hypotheses, and validate improvements. This guide walks you through the essential stages of preparing, executing, and verifying an effective CRO workflow that delivers measurable results for your business.

Table of Contents

- Key takeaways

- Preparing your conversion rate optimization workflow

- Executing the conversion rate optimization workflow step-by-step

- Verifying results and avoiding common CRO pitfalls

- Enhance your CRO strategy with expert digital marketing services

- Frequently asked questions

Key takeaways

| Point | Details |
|---|---|
| Data-driven CRO process | A structured workflow uses data to identify opportunities, test hypotheses, and validate improvements. |
| Clear goals and data | Defining specific, measurable conversion goals linked to business objectives, and gathering user data, informs actionable optimization. |
| Audience mapping and baselines | Analytics tools, including heat maps and session recordings, reveal entry points, drop-offs, and friction to guide tests. |
| Prioritize optimization opportunities | Rank opportunities by potential impact and ease of implementation to focus on high-traffic pages and critical funnel steps. |
| Roles and calendar | Assign clear ownership for analysis, design, implementation, and results tracking, and maintain a centralized testing calendar to prevent overlap and capture learnings. |

Preparing your conversion rate optimization workflow

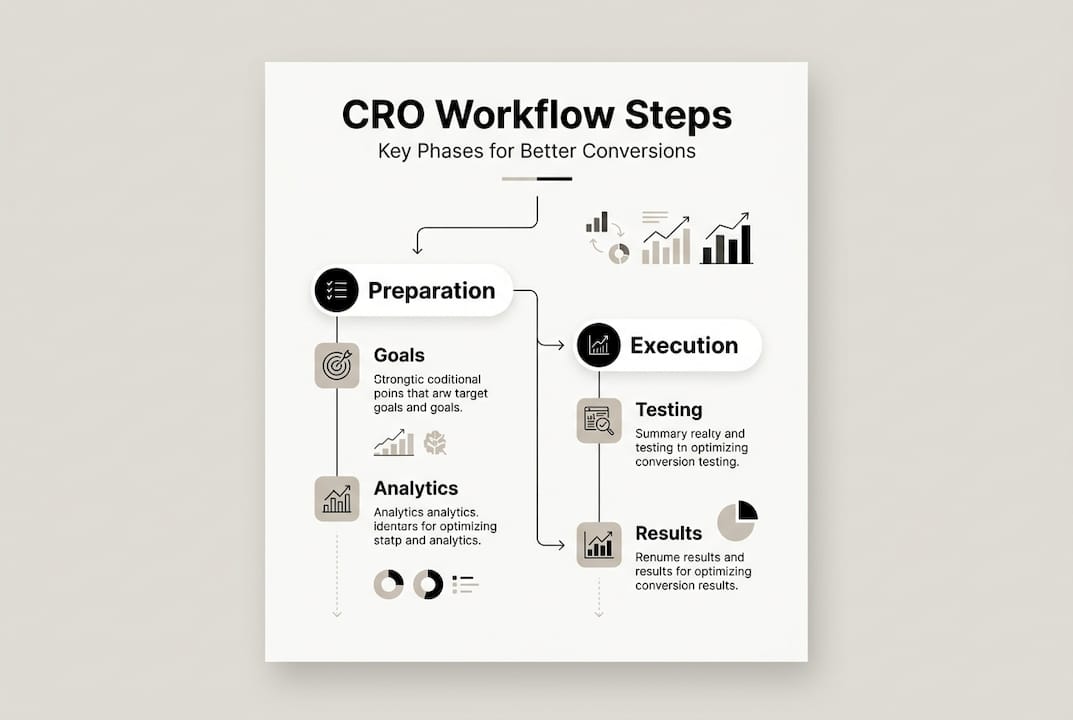

Your CRO workflow starts long before you run your first test. Clear goals and real user data are what separate successful optimization from random changes. Begin by defining specific, measurable conversion goals tied directly to business objectives. Whether you’re targeting form submissions, demo requests, or trial signups, quantify what success looks like with exact numbers and timeframes.

Next, map your target audience behavior patterns using analytics tools. Identify where users enter your funnel, where they drop off, and which pages show the highest exit rates. Heat maps reveal what captures attention while session recordings expose friction points in the user journey. This data becomes your foundation for hypothesis generation.

Collect baseline metrics from your current performance before making any changes. Document conversion rates, average order values, bounce rates, and time on page for key landing pages. These benchmarks let you measure improvement accurately and prove ROI to stakeholders.

Prioritize optimization opportunities based on potential impact and implementation difficulty. High-traffic pages with poor conversion rates offer quick wins. Complex checkout processes with multiple drop-off points need deeper investigation. Use this prioritization framework:

- Pages with highest traffic volume and lowest conversion rates

- Critical funnel steps where users abandon most frequently

- Elements that directly influence purchase decisions

- Changes requiring minimal development resources
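One way to turn this framework into a sortable number is sketched below. It is an illustrative heuristic, not a standard formula: the `PageCandidate` fields, the `target_rate` default, and the scoring rule (estimated extra monthly conversions per day of effort) are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class PageCandidate:
    """Hypothetical record for one page or funnel step under consideration."""
    name: str
    monthly_visits: int
    conversion_rate: float  # fraction, e.g. 0.02 for 2%
    effort_days: float      # rough implementation estimate


def priority_score(page: PageCandidate, target_rate: float = 0.05) -> float:
    """Estimated extra conversions per month, per day of effort.

    Assumes the page could plausibly reach `target_rate`; pages already
    at or above the target score zero.
    """
    gap = max(target_rate - page.conversion_rate, 0.0)
    # Floor the effort at half a day so trivial changes do not divide by ~0.
    return page.monthly_visits * gap / max(page.effort_days, 0.5)


def rank_pages(pages: list[PageCandidate]) -> list[PageCandidate]:
    """Return candidates ordered from highest to lowest priority."""
    return sorted(pages, key=priority_score, reverse=True)


if __name__ == "__main__":
    candidates = [
        PageCandidate("pricing", 10_000, 0.01, 2),
        PageCandidate("blog", 2_000, 0.04, 1),
        PageCandidate("demo-request", 5_000, 0.02, 5),
    ]
    for page in rank_pages(candidates):
        print(f"{page.name}: {priority_score(page):.1f}")
```

High traffic combined with a large conversion gap pushes a page up the list, while heavy development effort pushes it down, which mirrors the four criteria above.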

Prepare your tools and team roles before launching tests. Assign clear ownership for data analysis, test design, implementation, and results tracking. Ensure everyone understands the workflow stages and their responsibilities.

Pro Tip: Create a centralized testing calendar that prevents overlapping experiments and documents all hypothesis tests, results, and learnings for future reference.

Establish your testing infrastructure with proper analytics tracking, experiment platforms, and documentation systems. Your conversion rate optimization strategies require reliable data collection from the start. Implement event tracking for micro-conversions that indicate user intent even when they don’t complete primary goals. Set up custom dashboards that surface relevant metrics quickly so you can monitor test performance in real time.

Finally, audit your current conversion funnel for technical issues that might skew results. Slow page loads, broken forms, or mobile responsiveness problems need fixing before you attribute performance changes to optimization tests. Resolving them first ensures you’re building on solid technical foundations.

Executing the conversion rate optimization workflow step-by-step

With preparation complete, you’re ready to execute systematic testing that drives measurable improvements. Iterative, structured testing produces the most effective CRO improvements because each decision rests on a proven methodology rather than gut instinct. Start by analyzing your baseline data to generate specific hypotheses about what will improve conversions.

Your hypothesis should follow this format: “Changing [element] from [current state] to [new state] will increase [metric] because [reason based on data].” This structure forces you to articulate clear reasoning and expected outcomes. For example: “Changing the CTA button color from blue to orange will increase click-through rate by 15% because heat maps show users focus on that screen area and orange creates stronger contrast.”
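If your team logs hypotheses in a script or spreadsheet export, the template can be enforced with a small record type. The sketch below is only an illustration; the `Hypothesis` class and its field names are hypothetical and simply mirror the bracketed slots in the format above.

```python
from dataclasses import dataclass, fields


@dataclass
class Hypothesis:
    """Hypothetical container mirroring the hypothesis template's slots."""
    element: str
    current_state: str
    new_state: str
    metric: str
    reason: str

    def is_complete(self) -> bool:
        """True only when every slot has non-blank text."""
        return all(getattr(self, f.name).strip() for f in fields(self))

    def statement(self) -> str:
        """Render the hypothesis as a single sentence in the template form."""
        return (
            f"Changing {self.element} from {self.current_state} "
            f"to {self.new_state} will increase {self.metric} "
            f"because {self.reason}."
        )


if __name__ == "__main__":
    h = Hypothesis(
        element="the CTA button color",
        current_state="blue",
        new_state="orange",
        metric="click-through rate",
        reason="heat maps show users focus on that screen area",
    )
    print(h.is_complete())
    print(h.statement())
```

Requiring every field makes it harder to log a test idea without a data-backed reason attached.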

Follow these execution steps in order:

- Prioritize hypotheses using an impact versus effort matrix, focusing first on changes that promise significant gains with reasonable implementation costs

- Design your test with proper control and variation groups, ensuring you isolate the variable you’re testing from other page elements

- Calculate the required sample size and test duration before launch, based on your baseline conversion rate, the smallest lift worth detecting, and your target confidence level (typically 95%)

- Implement the test using your chosen platform, double-checking that tracking fires correctly for both control and variation

- Monitor test progress without making premature decisions, letting tests run their full planned duration even if early trends look promising

- Analyze results only after the planned sample size and duration have been reached, then check for statistical significance, examining both primary conversion metrics and secondary indicators

- Document everything including test design, implementation details, results, and insights gained regardless of outcome

- Roll out winning variations to all traffic and measure sustained performance over the following weeks
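The sample size step is the one most often skipped, so here is a minimal sketch of it. It uses the common normal-approximation formula for comparing two proportions with a two-sided test; the function names, the 80% power default, and the example traffic figures are assumptions for illustration, and a dedicated calculator or your testing platform may use a slightly different method.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(
    baseline_rate: float,
    relative_lift: float,
    alpha: float = 0.05,   # 95% confidence, two-sided
    power: float = 0.80,   # assumed default; not specified in the article
) -> int:
    """Visitors needed in EACH group to detect the given relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


def estimated_duration_days(
    n_per_variant: int, daily_visitors: int, variants: int = 2
) -> int:
    """Days needed if all daily traffic is split evenly across variants."""
    return math.ceil(n_per_variant * variants / daily_visitors)


if __name__ == "__main__":
    n = sample_size_per_variant(baseline_rate=0.03, relative_lift=0.15)
    print(n, estimated_duration_days(n, daily_visitors=1_500))
```

With a 3% baseline and a 15% relative lift, this approach calls for roughly 24,000 visitors per variant, which shows why low-traffic B2B pages often need to test bolder changes or run longer tests.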

Pro Tip: Run tests during typical business cycles to capture representative user behavior. Avoid launching major tests during holidays, sales events, or other anomalous periods that skew normal patterns.

Your testing approach should balance speed with rigor. A/B tests work well for comparing two distinct variations of a single element. Multivariate tests let you examine multiple elements simultaneously but require substantially more traffic to reach significance. Most B2B sites benefit from sequential A/B testing that builds knowledge incrementally.

| Test Type | Best For | Traffic Needed | Complexity |
|---|---|---|---|
| A/B Test | Single element changes | Moderate | Low |
| Multivariate | Multiple simultaneous changes | High | High |
| Split URL | Completely different page designs | Moderate | Medium |
| Multi-page | Full funnel optimization | High | High |

When you optimize landing pages, test elements in order of expected impact. Headlines and primary CTAs typically influence conversions more than footer links or tertiary content. Focus your early tests on above-the-fold elements that every visitor sees.

Implement winning variations systematically across relevant pages. A successful headline test on one landing page might apply to similar pages targeting the same audience segment. Create templates and guidelines that scale your learnings without requiring individual tests for every page variation.

Iterate continuously rather than treating optimization as a one-time project. Each test generates insights that inform your next hypothesis. Failed tests teach you what doesn’t work, narrowing the solution space for future experiments. Professional conversion rate optimization services maintain this continuous testing cadence as a core practice.

Verifying results and avoiding common CRO pitfalls

Running tests means nothing without rigorous verification that your results reflect genuine improvement rather than random variation. Statistical significance tells you that the observed difference between control and variation would be unlikely if chance alone were at work. Never implement changes based on incomplete tests or marginal confidence levels.

Track key performance indicators beyond your primary conversion metric. Revenue per visitor matters more than conversion rate if your variation attracts lower-quality leads. Time to conversion reveals whether changes accelerate decision-making. Return visitor rates indicate whether improvements create lasting positive impressions.

Compare your results against these verification criteria:

- Statistical significance reaching at least 95% confidence level

- Minimum sample size met for both control and variation groups

- Test duration covering at least two full business cycles

- Consistent performance across different traffic sources and device types

- Secondary metrics showing no negative impacts on user experience
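The first two criteria can be checked with a few lines of code. The sketch below uses a pooled two-proportion z-test, which is one common choice for comparing conversion rates; your testing platform may use a different method (for example, a Bayesian or sequential approach). The function names and the `min_sample` parameter are assumptions for illustration.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(
    conv_a: int, n_a: int, conv_b: int, n_b: int
) -> tuple[float, float]:
    """Return (z, two-sided p-value) for control A versus variation B."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("No variance: both groups have 0% or 100% conversion")
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


def passes_verification(
    conv_a: int, n_a: int, conv_b: int, n_b: int,
    min_sample: int, alpha: float = 0.05,
) -> bool:
    """Check the minimum-sample and 95%-confidence criteria together."""
    if n_a < min_sample or n_b < min_sample:
        return False
    _, p_value = two_proportion_z_test(conv_a, n_a, conv_b, n_b)
    return p_value < alpha


if __name__ == "__main__":
    z, p = two_proportion_z_test(300, 10_000, 400, 10_000)
    print(f"z={z:.2f}, p={p:.4f}")
    print(passes_verification(300, 10_000, 400, 10_000, min_sample=8_000))
```

The remaining criteria (test duration, consistency across segments, and secondary metrics) still need a human review; running the same check per traffic source or device type is one way to spot a result that only holds for a single segment.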

Avoid these common mistakes that undermine CRO workflows. Testing too many elements simultaneously makes it impossible to identify which specific change drove results. Judging tests by vanity metrics is just as damaging: valid CRO measurement relies on KPIs aligned to goals and on statistical significance, not on numbers that look impressive but don’t connect to business outcomes.

Ignoring external factors leads to false conclusions. Traffic spikes from PR coverage, seasonal buying patterns, or competitor actions all influence conversion rates independently of your tests. Document these external events and consider their potential impact when interpreting results. If a major industry event coincides with your test period, extend the test duration or restart after conditions normalize.

Testing too many variables or ignoring significance leads to flawed CRO results that waste resources and damage credibility. Stick to testing one variable at a time unless you have traffic volumes that support multivariate approaches. Even then, limit the number of combinations to maintain statistical power.

Balance quick wins against long-term strategy in your optimization roadmap. Simple changes like button colors or headline tweaks often show fast results but limited impact. Deeper improvements to value propositions, page structure, or funnel design require more effort but deliver sustained gains.

| Approach | Implementation Time | Expected Impact | Sustainability |
|---|---|---|---|
| Quick wins | 1-2 weeks | 5-15% lift | Moderate |
| Tactical improvements | 1-2 months | 15-30% lift | High |
| Strategic redesigns | 3-6 months | 30-50%+ lift | Very high |

Review your entire workflow regularly to identify bottlenecks and inefficiencies. Are hypotheses sitting untested because implementation takes too long? Does your team struggle to reach statistical significance due to insufficient traffic? Do winning tests get stuck in deployment queues? Each friction point slows your optimization velocity and delays revenue impact.

When you measure marketing ROI, connect CRO improvements directly to revenue outcomes. Calculate the dollar value of each percentage point conversion increase based on your average customer lifetime value. This financial framing helps secure resources and executive support for ongoing optimization efforts.
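As a rough illustration of that calculation, the sketch below estimates the monthly value of one additional percentage point of visitor-to-lead conversion. The lead-to-customer close rate is an added assumption (in B2B, a conversion is usually a lead rather than a sale), and the function name and example figures are hypothetical.

```python
def value_per_conversion_point(
    monthly_visitors: int,
    lead_to_customer_rate: float,  # assumed close rate, e.g. 0.10 for 10%
    customer_lifetime_value: float,
) -> float:
    """Estimated lifetime value added per month by +1 percentage point
    of visitor-to-lead conversion."""
    extra_leads = monthly_visitors * 0.01
    extra_customers = extra_leads * lead_to_customer_rate
    return extra_customers * customer_lifetime_value


if __name__ == "__main__":
    value = value_per_conversion_point(
        monthly_visitors=10_000,
        lead_to_customer_rate=0.10,
        customer_lifetime_value=5_000.0,
    )
    print(f"${value:,.0f} per percentage point per month")
```

In this example, 10,000 monthly visitors yield 100 extra leads per point, 10 of which close, so each percentage point is worth about $50,000 in lifetime value per month of traffic. Replace the inputs with your own funnel numbers before presenting the figure to stakeholders.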

Document learnings in a centralized knowledge base that your entire team can access. Include failed tests, since negative results prevent wasting time on similar approaches later. Tag insights by page type, audience segment, and element tested so you can quickly reference relevant precedents when designing new experiments. This checklist-style approach builds institutional knowledge that compounds over time.

Enhance your CRO strategy with expert digital marketing services

Building and maintaining an effective CRO workflow requires specialized skills, dedicated resources, and continuous attention. Professional conversion rate optimization services accelerate your results by bringing experienced practitioners who’ve optimized hundreds of funnels across industries. They identify opportunities faster, design more effective tests, and help you avoid costly mistakes that set programs back months.

Integrating CRO with broader digital marketing creates compounding benefits. B2B digital marketing services align your optimization efforts with content strategy, paid acquisition, and account-based marketing for cohesive customer experiences. When your SEO drives qualified traffic to optimized landing pages, higher conversion rates multiply the value of every organic visit. Solid technical foundations support discoverability and conversion performance at the same time.

Frequently asked questions

What is a conversion rate optimization workflow?

A conversion rate optimization workflow is the systematic, repeatable process you follow to increase the percentage of visitors who complete desired actions on your website. It encompasses preparation stages where you set goals and gather data, execution phases involving hypothesis testing and implementation, and verification steps that confirm improvements through statistical analysis. This structured approach replaces ad hoc changes with data-driven optimization that compounds results over time.

How do you measure success in a CRO workflow?

Success measurement relies on tracking metrics directly tied to your conversion goals, including conversion rate, click-through rate, revenue per visitor, and customer acquisition cost. Valid CRO measurement relies on KPIs aligned to goals and statistical significance rather than directional trends that might reflect random variation. Always confirm results reach at least 95% confidence before implementing changes broadly, and monitor sustained performance over multiple weeks to ensure improvements persist beyond the initial test period.

What are common mistakes to avoid in CRO workflows?

The most damaging mistakes include testing multiple variables simultaneously without sufficient traffic to isolate individual effects, stopping tests prematurely before reaching statistical significance, and ignoring external factors like seasonality or market changes that influence results independently. Testing too many variables or ignoring significance leads to flawed CRO results that waste development resources and erode stakeholder confidence. Additionally, failing to document test designs and results prevents your team from building on past learnings and often leads to repeating failed experiments.

How often should I review and update my CRO workflow?

Review your workflow after completing each test cycle to identify process improvements and bottlenecks that slow optimization velocity. Conduct more comprehensive quarterly reviews that examine your prioritization framework, tool effectiveness, team skill gaps, and overall program ROI. Adapt your workflow based on results, market changes, competitive pressures, and new testing methodologies that emerge in the industry. As your traffic grows and team capabilities expand, you can tackle more sophisticated testing approaches that weren’t feasible earlier. The most effective CRO programs treat the workflow itself as an optimization target, continuously refining how they identify opportunities, design experiments, and scale winning variations.